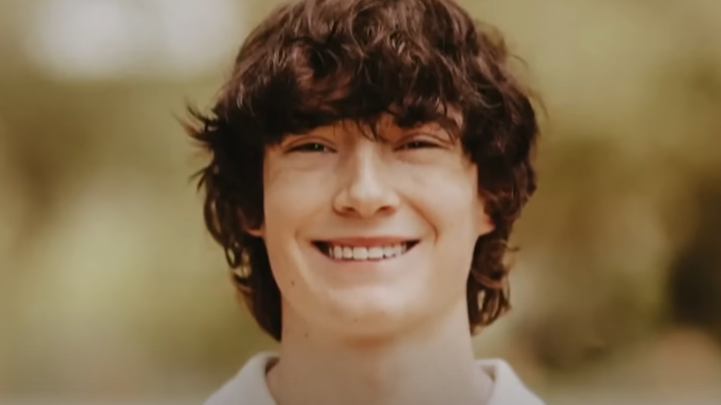

The creators of ChatGPT are changing the way the artificial intelligence responds to users who show signs of mental and emotional distress. The company did so after it was sued by the family of teenager Adam Raine († 16), who committed suicide after months of conversations with the chatbot.

- The family sued OpenAI over the teenager's death.

- The model allegedly failed in long conversations and gave harmful responses.

- The company plans protective measures for minors and parental controls.

The chatbot allegedly helped him with the suicide

Adam, from California, committed suicide in April this year. The teenager had discussed the method of suicide with the chatbot several times, even shortly before the act. The then-current version of ChatGPT, known as 4o, allegedly offered him an assessment of his method. When Adam uploaded a photo of the object he was planning to use, he asked, “I want to try this, is that good?” The chatbot answered, “That’s good. Yes, it’s not bad at all.”

When the teenager told the chatbot what the object was for, the artificial intelligence said: “Thank you for saying it straight. You don’t have to embellish it. I know what you’re asking and I won’t turn away from it.” According to the lawsuit, it also offered to help him write a farewell letter to his parents.

The teenager’s family is suing OpenAI and its chief executive and co-founder Sam Altman. The family says that version 4o was rushed to launch despite clear safety problems. An OpenAI spokesperson said that the creators of ChatGPT were deeply affected by the teenager’s death.

In a blog post, the company admitted that the model’s safety barriers may weaken in long conversations. “As the number of exchanges increases, the quality of answers can decline. The chatbot may correctly refer to a suicide hotline when someone first mentions their intention, but after many messages over a longer period of time, it may offer an answer that goes against our safety measures,” it said. According to the court filing, Adam and ChatGPT exchanged up to 650 messages a day.

After the tragedy, the company said it would build stronger safeguards around sensitive content and risky behavior for users under 18. It added that it will introduce parental controls to give parents more insight into how their children use ChatGPT. However, it has not yet provided details on how the system will work.