ZAP // StockCake; adengoled / Depositphotos

Even without secret meetings or illegal agreements, algorithms tasked with setting prices in real time can push those prices worryingly high. Game theory explains why, and how the solution might involve taking “regret” out of the equation.

Imagine a small town with two merchants selling a certain product. Customers naturally prefer cheaper products, so merchants are forced to compete to offer the lowest price.

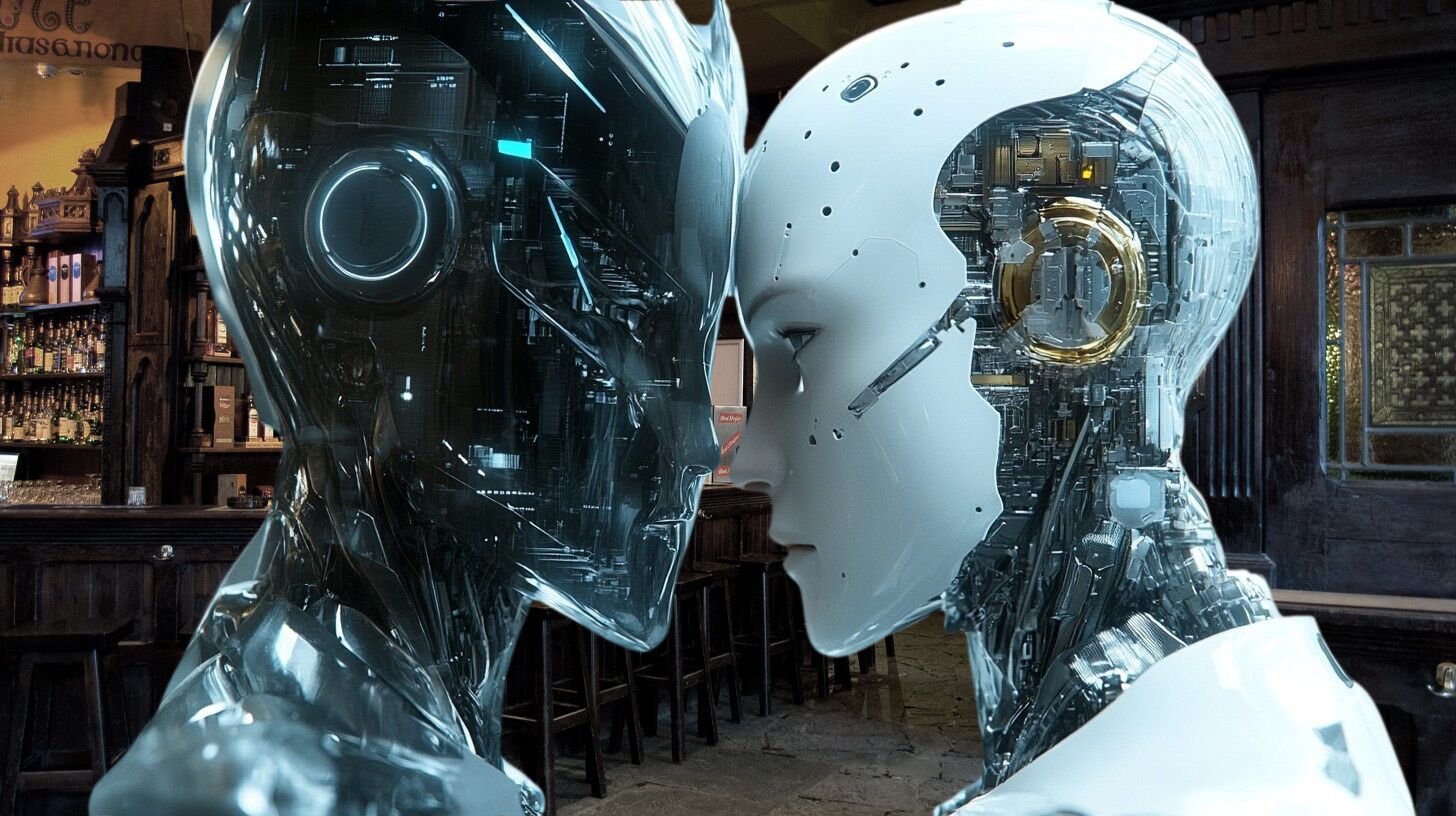

Fed up with their slim profits, the two merchants meet one night in a bar to discuss a secret plan: if they raise prices together instead of competing, they can both make more money.

But this kind of artificial and intentional price fixing, known as collusion, is illegal. The merchants decide not to take the risk, and everyone continues to benefit from cheap products.

For more than a century, laws in Europe and the USA have broadly followed this model: ban these backroom deals and thereby guarantee fair prices.

Nowadays, however, it is no longer that simple. Across vast areas of the economy, sellers increasingly rely on computer programs called learning algorithms, which repeatedly adjust prices in response to new data about the state of the market.

These algorithms are often much simpler than the “deep learning” systems that currently power artificial intelligence, but they can still behave in unexpected ways, and that behavior often results in higher prices.

So how can regulators ensure that algorithms set fair prices? The traditional approach no longer works, because it is based on the ability to detect explicit collusion.

“Algorithms definitely don’t go drinking with each other,” computer scientist Aaron Roth, a researcher at the University of Pennsylvania and co-author of a recent study on collusion between algorithms, told Quanta.

For more than a century, enforcement against cartels was based on a simple idea: prohibit explicit agreements between competitors. If two companies meet in a “dark corner” to agree prices, it is a crime; if they do not, it is assumed that the competitive market will keep prices low.

But in a world where companies use learning algorithms to adjust prices in real time, this logic starts to fail.

In 2019, a widely cited study showed that competing algorithms, in a simulated market, were able to “learn” to tacitly collude: whenever one lowered the price, the other retaliated with an even more aggressive drop, creating the implicit threat of a price war.

The end result was higher prices: the mutual fear of triggering this downward spiral led the algorithms to keep prices high.

In their study, recently posted as a preprint on arXiv, Aaron Roth’s team showed that even seemingly benign algorithms, designed only to maximize their own profit, can, under certain conditions, end up leading to higher prices for consumers.

The key to this phenomenon lies in game theory, a branch of applied mathematics that studies situations of strategic interaction between two or more “players”, where each player’s choices affect the outcome for everyone, and which can tell us, for example, whether to split the bill equally.

A central concept of this theory is “equilibrium”: a situation in which neither party has an incentive to change strategy, given the behavior of the other.
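The idea of equilibrium can be made concrete with the two merchants from the opening story. The sketch below is only an illustration: the profit numbers and the names `PROFITS` and `is_equilibrium` are hypothetical and do not come from any of the studies mentioned. It checks, for each pair of prices, whether either seller could earn more by switching price alone.

```python
# Hypothetical per-round profits (seller_a, seller_b) in a two-price game.
# The numbers are illustrative assumptions, not data from the studies.
PROFITS = {
    ("high", "high"): (3, 3),
    ("high", "low"): (0, 4),
    ("low", "high"): (4, 0),
    ("low", "low"): (1, 1),
}
PRICES = ("high", "low")


def is_equilibrium(price_a, price_b):
    """True if neither seller can gain by changing only its own price."""
    profit_a, profit_b = PROFITS[(price_a, price_b)]
    a_can_gain = any(PROFITS[(alt, price_b)][0] > profit_a for alt in PRICES)
    b_can_gain = any(PROFITS[(price_a, alt)][1] > profit_b for alt in PRICES)
    return not (a_can_gain or b_can_gain)


equilibria = [pair for pair in PROFITS if is_equilibrium(*pair)]
print(equilibria)
```

With these assumed numbers, the only equilibrium is the one where both sellers charge the low price: in a single round, each would undercut a rival who charged the high price.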

Many learning algorithms seek precisely this: to continually adjust until there is no obvious way to improve the result.

A particularly important type of algorithm is the so-called “no-swap-regret” algorithm. Put simply, it guarantees that even after many rounds of the game, the agent will not look back with regret and conclude that it would have gained more had it systematically substituted one action for another.
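One way to read this definition is as a quantity that can be computed after the fact. The following is a minimal sketch, assuming we have a log of which action was played each round and what every action would have earned; the function name `swap_regret` and the sample data are hypothetical. For each action, it asks how much more the agent would have earned by replacing every use of that action with the single best alternative.

```python
def swap_regret(actions, payoffs):
    """Total swap regret of a play history.

    actions[t]    -- index of the action actually played in round t
    payoffs[t][a] -- payoff action a would have earned in round t
    """
    n_actions = len(payoffs[0])
    total = 0.0
    for played in range(n_actions):
        # Rounds in which this particular action was chosen.
        rounds = [t for t, a in enumerate(actions) if a == played]
        earned = sum(payoffs[t][played] for t in rounds)
        # Best payoff from swapping this action for one fixed alternative
        # (keeping the action itself is one of the options, so the gain is >= 0).
        best_swap = max(
            sum(payoffs[t][alt] for t in rounds) for alt in range(n_actions)
        )
        total += best_swap - earned
    return total


# Hypothetical four-round history with two prices: 0 = "low", 1 = "high".
actions = [0, 0, 1, 1]
payoffs = [[1, 3], [1, 3], [2, 4], [0, 4]]
print(swap_regret(actions, payoffs))
```

A no-swap-regret algorithm is one for which this quantity, divided by the number of rounds, shrinks toward zero as play continues.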

A team of game theorists proved, in a paper published in 2000, that when two algorithms of this type compete against each other in any game, they end up converging to a specific form of equilibrium, one that corresponds to the best possible solution in a single-round scenario. In single-round games, “threats” do not work, because there is no “tomorrow” in which to carry them out.

In a paper published last year, Jason Hartline, a researcher at Northwestern University, applied these results to a competitive market model in which companies can adjust prices round after round.

The conclusion was optimistic: if both competitors use no-swap-regret algorithms, prices tend to be competitive, and collusion becomes impossible. The problem arises when one of these algorithms faces an opponent of another kind.

In their recent study, Aaron Roth and colleagues looked at what happens if a no-swap-regret algorithm competes with a “non-responsive” strategy: instead of reacting to the rival’s behavior, the seller chooses a random price, following fixed probabilities defined in advance.

When the researchers calculated what the “optimal” probabilities would be for this non-responsive strategy, they found something unexpected: the best way to maximize profit against a no-swap-regret algorithm is to assign a very high probability to very high prices, and lower probabilities to a wider range of lower prices.

In practical terms, this behavior forces the learning algorithm to raise its prices too, or else fall behind on average. From time to time, the non-responsive player lowers its price and seizes the opportunity to attract customers, but it spends much of the time charging high prices.
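A toy calculation can show why a rival facing such a strategy is pulled toward high prices. The sketch below is not the model from Roth’s study: the price grid, the probabilities in `FIXED_PRICE_PROBS`, and the market rule (a single customer per round, zero costs, the cheaper seller wins, ties are split) are all assumptions made for illustration.

```python
import random

# Hypothetical non-responsive strategy: mostly the top price, with
# occasional lower prices. Prices and probabilities are illustrative only.
FIXED_PRICE_PROBS = {10: 0.7, 7: 0.1, 5: 0.1, 3: 0.1}


def draw_price(rng):
    """Sample a price from the fixed distribution, ignoring the rival."""
    prices = list(FIXED_PRICE_PROBS)
    weights = list(FIXED_PRICE_PROBS.values())
    return rng.choices(prices, weights=weights, k=1)[0]


def expected_profit(price, rival_probs):
    """Expected per-round profit in the assumed toy market:
    the cheaper seller wins the sale, and ties are split evenly."""
    win = sum(p for rival, p in rival_probs.items() if rival > price)
    tie = rival_probs.get(price, 0.0)
    return price * (win + 0.5 * tie)


def best_fixed_price(rival_probs, grid=range(1, 11)):
    """Price on the grid with the highest expected profit against the rival."""
    return max(grid, key=lambda price: expected_profit(price, rival_probs))


best = best_fixed_price(FIXED_PRICE_PROBS)
print(best, round(expected_profit(best, FIXED_PRICE_PROBS), 2))
print(draw_price(random.Random(0)))
```

Under these assumptions, the most profitable reply is to sit just below the rival’s usual high price rather than to undercut its occasional low prices, which is the pattern the paragraph above describes.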

At first, even the authors thought this scenario seemed artificial. Wouldn’t a seller who saw its rival make more profit simply try to change strategy?

But a mathematical analysis shows otherwise: when these two types of algorithms confront each other, the system settles into an equilibrium. Both earn similar profits, as high as possible given the strategies in use. Neither side has an incentive to change its algorithm.

Consumers, however, are stuck with high prices, a typical outcome of collusion, but without any explicit agreement, threat or coordination. For regulators, this creates a dilemma. It is not enough to ban sophisticated algorithms that appear “too smart” or capable of communicating with each other.

Hartline proposes a radically simple solution: ban all pricing algorithms except no-swap-regret ones, which, when used by everyone, push prices down. There are even recent methods to check whether an algorithm has this property without needing to inspect its code.

But even this approach does not solve every case, especially when algorithms compete with humans. Furthermore, some experts dispute the idea that what Roth describes is, technically, collusion, because collusion always presupposes a real possibility of “choosing not to collude”.

As pricing is increasingly delegated to algorithms, understanding when and how unjustifiably high prices arise, and what to do about them, is becoming a central question of contemporary economic policy.

Meanwhile, we wait for the day when learning algorithms evolve again, perhaps with the help of the now so popular artificial intelligence, and meet in a bar to have a few drinks and agree prices, with little hope that they will do so in order to lower them.