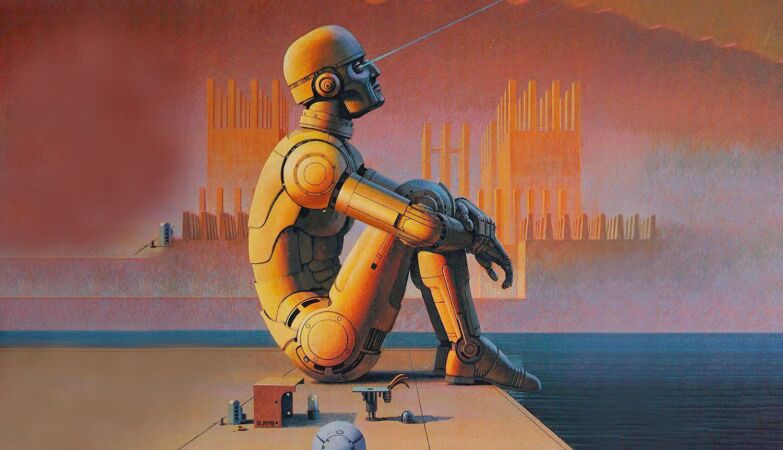

ZAP // Roc

“Robot Visions” by Isaac Asimov, 1990 (cover detail, expanded by AI)

The conflict in Iran — but also the war in Ukraine — shows not only that AI is radically changing the economics of war, which could be good news, but also that we may be heading towards a kind of “Chernobyl moment.”

We may soon face a disaster that forces us to realize, too late, that we should have established common rules to frame a technological development that we ourselves triggered.

Even Dario Amodei, founder of Anthropic, who appears committed to acting to prevent Armageddon, recognizes that he does not have the answer we desperately need.

One of the most interesting attempts to regulate the use of AI may be the one outlined during World War II by a doctoral student at Columbia University who, at the time, was temporarily working for the United States Navy.

His name was Isaac Asimov, and in his short story "Runaround" (1941) he formulated three laws that remain surprisingly inspiring for anyone thinking about how to solve the intellectual and political problem that AI represents in the context of war: Asimov's famous three Laws of Robotics.

Laws of Robotics

- A robot may not harm a human being or, through inaction, allow a human being to come to harm.

- A robot must obey orders given by humans, except when those orders conflict with the First Law.

- A robot must protect its own existence, as long as such protection does not conflict with the First or Second Law.

Later, Asimov added a further law, the Zeroth Law:

A robot may not harm Humanity or, through inaction, allow Humanity to come to harm.

Unlike more recent attempts by the OECD and the European Union to create regulation, Asimov's laws are distinguished by their admirable conciseness, says Francesco Grillo, a researcher at Università Bocconi in Italy and director of the think tank Vision, in an article on .

Asimov's famous laws establish that a robot (what we would today call an "artificially intelligent agent") should never cause harm to a human being, nor allow such harm to occur through inaction.

It must always obey orders given by human beings, unless such orders conflict with the first prohibition. Finally, it must always protect its own existence, unless this conflicts with the first or second provision.

In his short story, Asimov himself shows how these three laws can generate internal contradictions and lead to the “paralysis” of robots. Still, Asimov’s three principles can continue to be useful as a starting point for the strategy we need now, says Grillo.

Anthropic takes a stand

The greatest merit of the note that Dario Amodei recently wrote about the dangers of a technology that is still in its adolescence is its recognition that Anthropic, the company he founded, is using its own large language model, Claude, to develop more advanced versions of itself.

An AI is generating even smarter robots, and this brings us closer to the "singularity" theorized by the great mathematician John von Neumann: the moment when artificial intelligence surpasses human intelligence and makes us irrelevant.

If this technology is a teenager, it is growing very fast and will soon escape the control of its creator.

Amodei does not, however, appear to have a concrete proposal on how to manage this problem. He has said that Anthropic's contracts with the U.S. Department of War should never allow the company's models to be used for either "mass domestic surveillance" or "fully autonomous weapons".

It is a requirement that put Anthropic in a tough confrontation with the United States government. Still, it seems like a relatively limited response, covering only one dimension of a much broader problem.

Amodei focuses mainly on the safety of American citizens, when it is currently people in other parts of the world who are most affected by the use of autonomous weapons. We need a bolder vision, and Asimov's intuitions can help, says Grillo.

New rules

One option would be to require all AI model creators to build into their models' fundamental code three simple and bold commands, Asimov-style, along these lines:

- You will never kill a human being, except in self-defense;

- You will always act for the good of humanity, unless doing so would violate the first command;

- When you have doubts that your actions may violate the first or second commands, you will choose inaction and ask for instructions.

Most likely, this initiative will have to come from a group of countries, following a model similar to that of the nuclear non-proliferation treaties. And it would be better to debate new ideas before we are forced to do so by some unintended, AI-driven nuclear event.

Like all other attempts to regulate a future that we cannot even imagine yet, these three commands will have limitations.

A robot could have refused to kill former Iranian leader Ali Khamenei, but this could be an acceptable price if it means avoiding a precedent for other discretionary and dangerous interpretations.

Robots may not always be able to identify human beings correctly, as Asimov himself acknowledged in later texts, but this may well be one of those intellectually fascinating problems that models designed to interpret human language will ultimately solve.

Most importantly, it will require not only information but also a great deal of wisdom to understand what is good for humanity.

Robots could often end up stalled, waiting for instructions. Even so, efficiency is not a religion we must follow when the challenge concerns the survival of our species.

Understanding what increasingly appears to be one of the greatest technological revolutions ever requires careful reflection and the ability to anticipate, concludes Grillo.