“How much do you think you should pay me for using my name?” asks Nilay Patel

*By Joshua Benton*

Sometimes you just get lucky with the editorial calendar.

About a month ago, the CEO of Superhuman, Mehrotra, agreed to be a guest on the podcast hosted by editor-in-chief Nilay Patel. Superhuman is the company formerly known as Grammarly, which is now just one of its AI-focused productivity tools, and Mehrotra and Patel would have had plenty to discuss as it was.

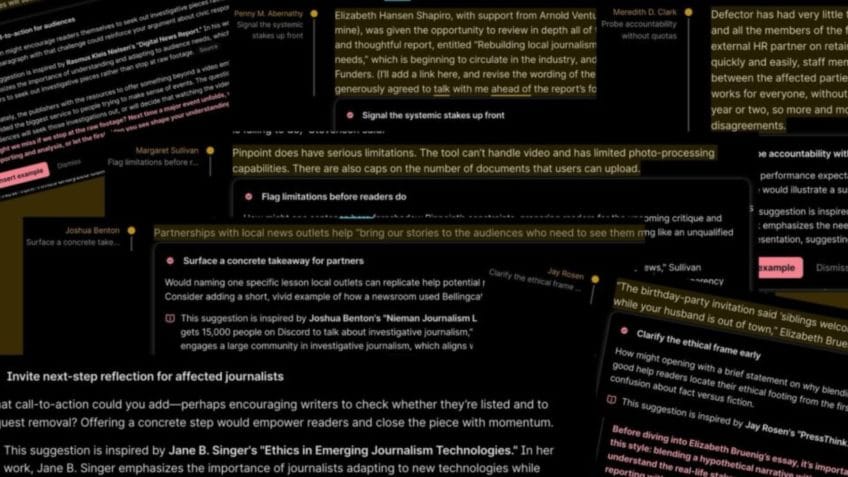

But then a little-known Grammarly feature called “Expert Review” came under scrutiny. It offered users suggestions for improving what they were writing, but the proposed changes were presented as coming from real writing experts: journalists, novelists, academics and more. It wasn’t the AI suggesting a plot twist; it was Stephen King. It wasn’t AI proposing a clearer way to explain science; it was Carl Sagan. And it wasn’t AI pointing out a way to improve your technology column; it was Nilay Patel.

Writers, as one might expect, were not pleased to see their names being used to lend credibility to AI editing suggestions. Technology journalist Julia Angwin filed a lawsuit, seeking compensation. By then, Mehrotra had already pulled “Expert Review” after 8 months, saying he wanted to “apologize and acknowledge that we will rethink our approach going forward.”

Mehrotra still appeared on the podcast. After a brief conversation about Superhuman’s product strategy, Patel got straight to the point:

Patel: “You don’t have our permission to use our names to do this. There were little check marks next to the name that indicated it was somehow official. People didn’t like that, I didn’t like that, and you removed the feature. Talk about the decision to release this feature with names you didn’t have permission for and the decision to take down the feature.”

Mehrotra apologized again (“This caused me a lot of pain as I felt that we delivered less than expected”) and noted that “Expert Review” was not especially popular and that “the feature was not good. It was not good for experts, it was not good for users.”

Mehrotra: “For some of the [Grammarly users], the people they want feedback from are the people they admire. They are the experts of the world, they are the people they try to look up to and try to model. They try to do this today with LLMs. They go to ChatGPT and Claude and say, ‘What would Nilay think about my text?’ This was the inspiration for what the user was trying to do.

On the other side was what the experts were trying to do. As we formed our strategy here, turning Grammarly into a platform, the first people I called when thinking about this were a set of experts. I spoke to some prominent YouTubers, I spoke to a very well-known book author, and they all told me the same thing. It’s a very difficult world for experts right now. It’s very difficult to create a connection. If you are an author, your way to reach your fans is to keep publishing more and more books. And everyone heard what we were doing and said, ‘It would be really amazing to develop an ongoing connection with my fans. What happens when they close my book? Can I still be with them and help them along the way?’ It seems like the world has turned against them, with AI summaries taking a huge chunk of their traffic and so on. That seems like a much better way to handle it.

That was the inspiration behind it. The team and the feature did not deliver. They didn’t deliver on either side, actually. We ended up with an experience that was quite suboptimal for the user and obviously suboptimal for the expert. The fundamental reason is something you said last week, which is that it’s very difficult to distill what you would do as an editor based on the outcome of your published work. It’s very difficult for AI to do this. We need your involvement to make this a good feature.”

To which Patel gave a very direct response:

Patel: “Okay. How much do you think you should pay me to use my name?”

Mehrotra’s answer is, in essence, attribution and a link.

Mehrotra: “It’s very important to think about attribution and think about impersonation, and so on. As an expert, there is an exchange you make on the internet. The idea is that when you publish content, myself included, you hope people use it. You want to refer to other people’s content. You want people to link to you. You really hope they attribute it to you when they do this. When someone uses your content, should they attribute it to you? Of course. And to attribute it to you, they need to use your name.

There’s a different line, which is: Should people be able to impersonate you? And I think that’s a very different standard. And we saw the lawsuit. We respectfully believe the allegations are without merit. The idea that the feature is impersonation is a huge exaggeration. Each mention was very clear: ‘This is inspired not only by this person, but also by a specific work by this person, with a clear attribution link back to them.’”

It’s true that naming specific people can be a powerful way of shaping an LLM’s response. Many prompts start by telling Claude something like: “You are ________, the world’s greatest expert on ___________.” You can ask ChatGPT: “Rewrite this essay in a lean, restrained style, using clear, direct language and short sentences, favoring simple vocabulary over elaborate constructions, emphasizing concrete details and action over explanation, avoiding abstraction and sentimentality, and conveying emotional depth indirectly through precise, unadorned prose.” Or you can simply type “Rewrite this like Ernest Hemingway” and save some keystrokes.

But does that mean Hemingway’s estate is now owed money for the LLM’s response? And what about “This suggestion is inspired by Joshua Benton’s ‘Nieman Journalism Lab analyses’”? Does that mean Mehrotra owes me dinner?

Listen to the full episode to hear the intense debate about how, in Patel’s words, “people don’t understand the difference between copyright and trademarks and names and image” and that “AI is collapsing these differences faster than ever before.”