The United States is entering a new phase of strategic competition, in which artificial intelligence is no longer an emerging capability but a decisive element of military power. In this ongoing AI arms race, speed matters. Capacity matters. But most of all, control matters. That is why the breakdown between Anthropic and the Pentagon should concern anyone paying attention to US national security.

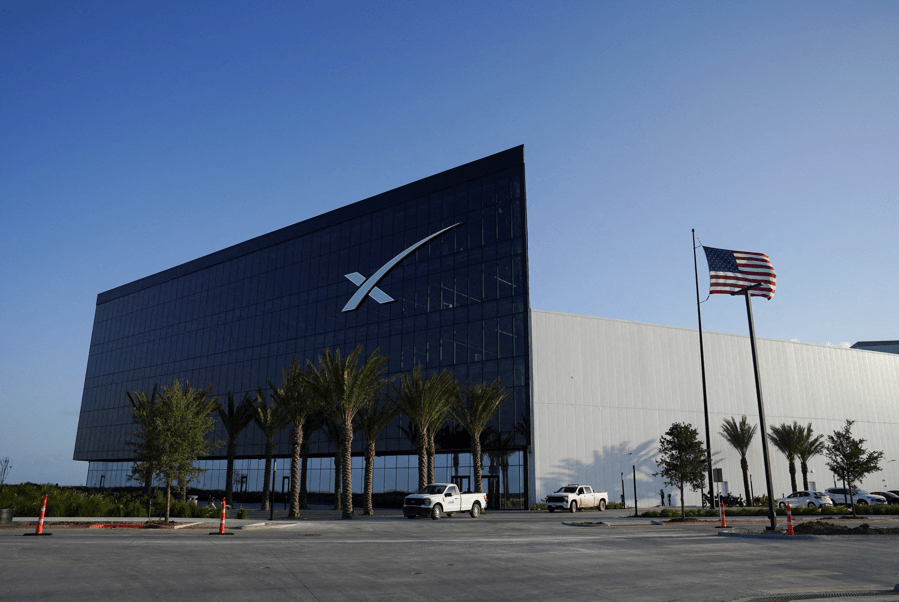

At the heart of the dispute is a simple but profound disagreement: who decides how advanced AI systems are used in a military context. Anthropic, developer of Claude and its super-powerful Mythos model, tried to impose limits on the use of its technology, drawing red lines around certain applications. The Pentagon, for its part, has insisted that it must maintain the ability to use AI tools for all lawful purposes in the country’s defense. When these positions proved irreconcilable, the relationship collapsed.

Anthropic ended up being classified as a supply chain risk, and the War Department was forced to look for alternatives to obtain AI capabilities. Since then, details about its Mythos model — considered “too dangerous” for public release — have come to light that add new and alarming concerns.

According to reports, Mythos is capable of autonomously identifying previously unknown cybersecurity vulnerabilities and weaponizing them, which, without adequate safeguards, would open a clear path for cyber criminals. The new tool is potentially so powerful that Anthropic itself has restricted access to it.

This episode should serve as a warning because it demonstrates how the current structure of the US AI ecosystem — a black box, based on closed and opaque systems — is fundamentally misaligned with the requirements of national defense.

Today, the Pentagon buys access to AI capabilities but does not control them. The training, testing, and ongoing development of these models remain firmly in the hands of private companies, which have their own governance models, risk tolerances, and business incentives.

This reality creates a dangerous dynamic: it gives a small number of private companies, without direct accountability, an effective veto over how the United States can employ one of the most decisive technologies of our time. This is not a sustainable model for a constitutional republic. Nor is it a viable basis for military dominance.

A system constrained by external approval processes, changing corporate policies, or the risk of sudden disruption is a system unable to keep up with the pace that modern warfare demands. And in a strategic competition defined by rapid, repeated cycles measured in weeks—not years—these limitations do more than slow the United States down. They create gaps.

China and its aligned partners, for example, are moving aggressively to deploy large-scale AI capabilities, leveraging open source models that can be adapted to a wide range of military and intelligence applications. Systems like DeepSeek are not subject to the same corporate governance structures that shape American companies.

They are designed to be modified, expanded, and integrated into a broad ecosystem that includes not only the Chinese military apparatus, but also a growing network of partner countries at odds with the United States.

This creates an asymmetric threat. While the United States debates permitted uses of AI through contracts with private vendors, its competitors are building flexible, state-aligned systems that can be quickly adapted to operational needs. If this gap persists, the US risks finding itself at a significant military disadvantage.

The solution is not to abandon the private sector, which continues to be an extraordinary source of innovation and technical leadership. Nor is it to dismiss ethical considerations, which must remain central to how the United States approaches the use of force. But it does mean recognizing that the current model, in which the government rents access to closed, proprietary systems that it does not fully control, is inadequate to the demands of strategic competition.

Washington needs to start investing in a different approach: the development of open-source, high-performance, secure, and adaptable AI models that the U.S. government and its closest allies can control, audit, and deploy without external constraints.

None of this eliminates the need for careful safeguards. There are important and legitimate debates to be had about the role of AI in warfare, from autonomy and targeting to surveillance and escalation. But these debates must be led by elected officials and military leaders accountable to the American people, not dictated by the acceptable use policies of private companies.

This strategic realignment can take several forms. It may involve government-led model development, partnerships with trusted research institutions, or the creation of open-weight models designed specifically for defense applications.

It could include frameworks among allies that ensure interoperability while preserving national control, as well as new acquisition strategies that prioritize transparency and modifiability over convenience.

Regardless of the path chosen, however, success will depend on getting the mechanism right.

The United States has long understood that it cannot outsource the foundations of its security. We build our own ships. We design our own weapons. We maintain command of the systems that sustain our military advantage. Artificial intelligence should be no different.

Building effective public-private partnerships that serve national defense will require more than technical capability — it will require trust, integrity, and strong processes.

This means establishing clear safeguards, aligning incentives, and ensuring that both government and industry share responsibility for the risks and outcomes of using these systems.

If structured well, this model can harness private sector innovation while preserving government authority over how these capabilities are ultimately used.

The Anthropic episode may prove to be not an anomaly but a harbinger. Unless we act now to ensure that the United States and its allies have access to AI systems they can actually control, it too may prove to be a warning we ignore.

Retired Maj. Gen. Robert F. Dees is a former U.S. Army commander and national security expert who has led troops from the 101st Airborne Division to U.S. Forces Korea and a joint U.S.-Israeli missile defense task force over a 31-year military career.

The opinions expressed in Fortune.com opinion pieces are solely those of the authors and do not necessarily reflect the opinions and beliefs of Fortune.

2026 Fortune Media IP Limited