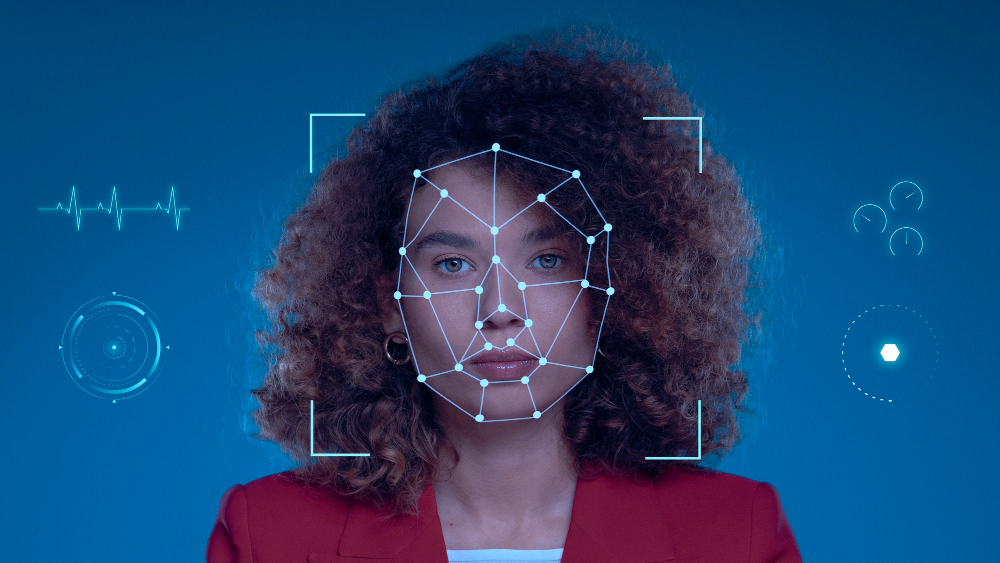

Deepfakes are no longer a niche technological curiosity; they have become one of the most concrete and sophisticated threats in modern cyberwarfare.

For a long time, the idea of having your voice or image cloned and used against you seemed like something out of a science fiction movie: distant, confined to big Hollywood productions or special effects labs. The reality of 2026 is different. With the exponential advance of Generative Artificial Intelligence (GenAI), deepfakes have become one of the most concrete and sophisticated threats in cyberwarfare and modern digital manipulation.

Here it is essential to make a clear distinction. When we talk about deepfakes in this article, we are not referring to fun social media filters that swap faces or to simple video edits made for entertainment. We are dealing with something far more serious, technical and dangerous: ultra-realistic synthetic media (video, audio and even real-time interactions) that is virtually indistinguishable from authentic content to the untrained eye and ear.

These creations are no longer just toys; they have become high-precision digital weapons. They are used to deceive security systems, manipulate public opinion at scale and carry out cyberattacks that target not only data but the very trust we place in institutions and human relationships.

AI as a double-edged weapon

Artificial Intelligence emerged with the promise of revolutionizing security and making human life easier. Unfortunately, it has also become a tool of power in the hands of criminals and state actors. For 2026, security reports project that deepfake creation will grow three- to fivefold compared with the previous year, making detection an unprecedented technical challenge.

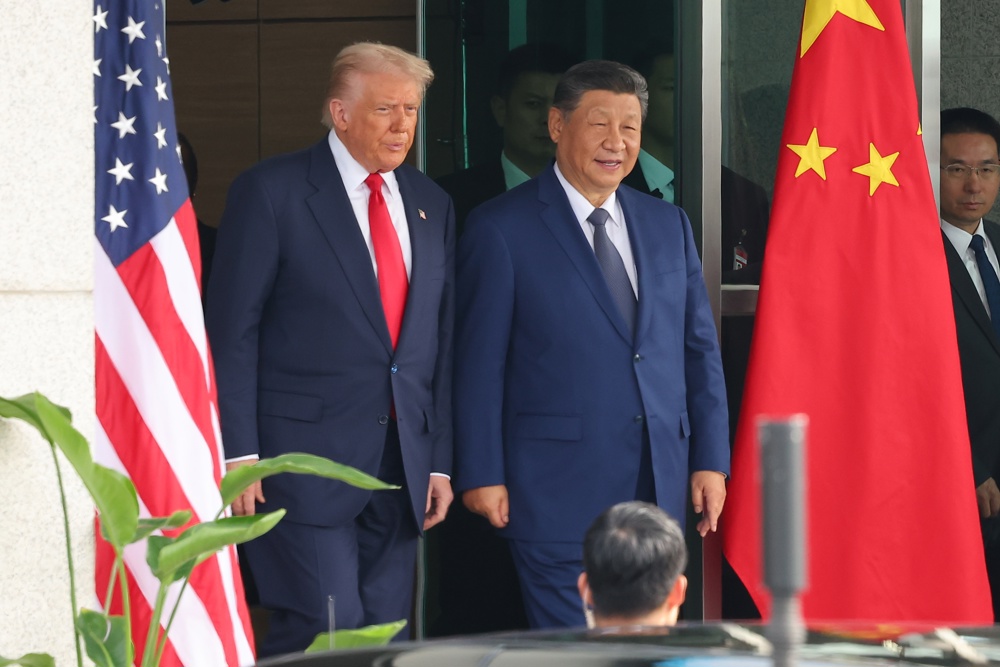

On the offensive side, AI is employed in hybrid warfare tactics. Disinformation campaigns are no longer just text posts on social media. They now include fake videos of world leaders or CEOs of large companies issuing false orders or making controversial statements, and such videos can crash stock markets or incite civil unrest within minutes.

Another critical point is the rise of financial fraud. So-called “vishing” (voice phishing) has evolved to the point where an executive’s cloned voice can be used in a phone call to authorize million-dollar bank transfers. It’s no longer just a fake email. It’s a voice you know, with the right accent and the expected tone of urgency, speaking directly to you.

Attack on the human mind

The most troubling innovation of 2026 is the combination of deepfakes with precision social engineering. Attackers use AI algorithms to mine a victim’s social media and public communications. From this information, the AI generates a personalized script and a deepfake that exploits that person’s specific emotional triggers.

Imagine receiving a video call from a family member in trouble. The image is perfect, the voice is identical and the context of the conversation makes perfect sense with what you discussed the day before. This level of personalization makes human resistance almost nil, as it attacks our most basic instinct: trust in those we love or respect.

Why are traditional defenses failing?

Many organizations and individuals still rely on defenses based on single, static signals. Basic liveness checks, such as asking the person to blink or turn their head, are no longer effective. Neither are simple biometric comparisons. In 2026, deepfakes are generated in real time and injected directly into the video feed through virtual camera drivers. This fools even security systems that were previously considered robust.

Technology has evolved to the point where AI can simulate two subtle cues on a synthetic face: the slight changes in skin color caused by blood flow, and the micro-movements that real human eyes make. What we need now is multi-layered identity intelligence. It should analyze not just the image but also device behavior, network health and behavioral patterns that AI cannot yet replicate perfectly.
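To make the idea of multi-layered identity intelligence more concrete, here is a minimal Python sketch. It assumes a verification pipeline that already produces a normalized score for each layer. The signal names, weights and thresholds are hypothetical, chosen only for illustration. They are not taken from any commercial product.

```python
from dataclasses import dataclass


@dataclass
class SessionSignals:
    # Hypothetical per-layer scores in [0.0, 1.0]; higher means "more likely genuine".
    face_match: float
    liveness: float
    device_integrity: float
    network_reputation: float
    behavior_consistency: float
    # Hypothetical flag: was the video fed through a virtual camera driver?
    virtual_camera_detected: bool


# Hypothetical weights (they sum to 1.0); a real system would calibrate them on labeled data.
WEIGHTS = {
    "face_match": 0.25,
    "liveness": 0.20,
    "device_integrity": 0.20,
    "network_reputation": 0.15,
    "behavior_consistency": 0.20,
}
APPROVE_THRESHOLD = 0.80  # hypothetical minimum weighted score to approve
LAYER_FLOOR = 0.40        # hypothetical minimum any single layer may score


def assess(s: SessionSignals) -> str:
    """Return 'approve', 'step_up' (ask for extra verification) or 'reject'."""
    # Hard rule: an injected virtual-camera feed is rejected regardless of scores.
    if s.virtual_camera_detected:
        return "reject"
    scores = {name: getattr(s, name) for name in WEIGHTS}
    # A single strong layer (e.g. a perfect face) cannot hide a layer that fails badly.
    if min(scores.values()) < LAYER_FLOOR:
        return "step_up"
    total = sum(WEIGHTS[name] * value for name, value in scores.items())
    return "approve" if total >= APPROVE_THRESHOLD else "step_up"
```

With these placeholder numbers, consider a session with a near-perfect face match and liveness score but a suspicious device (a `device_integrity` of 0.1). It is escalated to extra verification rather than approved. The flawless image alone is not trusted.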

Technological counter-attack

To combat this threat, the security industry is developing what we call Multimodal Defense. Systems such as Deepsight, from Incode Technologies, represent the state of the art in this area. They don’t just look at the face. They also analyze lighting consistency, the physics of movement and even image depth, all in a fraction of a second.

These tools perform thousands of checks in less than 100 milliseconds. They look for digital inconsistencies that are invisible to the human eye but obvious to an algorithm trained to detect other algorithms. It’s a war of code against code, where speed and accuracy determine who keeps control of the truth.

How can you protect yourself?

In a world where the lines between the real and the artificial are increasingly blurred, trust must be treated as the most valuable and scarce currency. Protecting your digital identity is, today, a matter of financial and emotional survival.

Some practical and essential measures for your daily life:

- Be wary of the unusual: If you receive an unexpected request for money or sensitive information, even via video or audio from someone you know, pause. Then verify the request through a separate communication channel.

- Multi-Factor Authentication (MFA) is mandatory: Never rely on a password alone. Use authenticator apps or physical security keys. Facial biometrics alone no longer guarantee secure access.

- Continuous Digital Education: Knowledge is your best armor. Understand that the technology to deceive you exists and is accessible. Share this knowledge with more vulnerable family members, such as the elderly and children.

- Privacy Audit: Periodically review what you share publicly. The less data about your voice and image is available for AI scraping, the harder it will be to create a convincing deepfake about you.
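Many authenticator apps implement time-based one-time passwords (TOTP, RFC 6238). The app and the service share a secret, and each one derives a short code from that secret and the current 30-second time window. A cloned face or voice cannot produce that code without the secret. The minimal Python sketch below uses only the standard library to show the mechanism. The function names are illustrative. The sketch omits things real apps also do, such as decoding Base32-encoded secrets and keeping the secret in secure storage. In practice, a vetted library is preferable to hand-rolled code.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(key: bytes, counter: int, digits: int = 6) -> str:
    # HMAC-SHA1 over the 8-byte big-endian counter (RFC 4226).
    mac = hmac.new(key, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation: the low 4 bits of the last byte pick a 4-byte window.
    offset = mac[-1] & 0x0F
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % (10 ** digits)).zfill(digits)


def totp(key: bytes, for_time: Optional[float] = None, step: int = 30, digits: int = 6) -> str:
    # The counter is the number of whole time steps since the Unix epoch (RFC 6238).
    t = time.time() if for_time is None else for_time
    return hotp(key, int(t // step), digits)


def verify(key: bytes, submitted: str, for_time: Optional[float] = None,
           step: int = 30, window: int = 1) -> bool:
    # Accept codes from adjacent time steps to tolerate small clock drift.
    t = time.time() if for_time is None else for_time
    for drift in range(-window, window + 1):
        expected = totp(key, t + drift * step, step, len(submitted))
        # Constant-time comparison avoids leaking how many characters matched.
        if hmac.compare_digest(expected, submitted):
            return True
    return False
```

A one-time code only protects you if it stays with you. A deepfake caller who persuades you to read the code aloud defeats it. That is why verifying unusual requests through a separate channel still matters.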

In 2026, AI governance and the regulation of synthetic media are central topics. Governments are beginning to require digital watermarks on AI-generated content. The goal is for browsers and applications to automatically distinguish real content from synthetic content.

However, laws tend to move more slowly than technology. That is why an individual defensive posture remains the most effective protection. Cybersecurity in the age of AI is not a product you buy but a stance you adopt.

Digital surveillance is control, and control is risk. Leaving passive mode and taking charge of your digital security is the first step toward ensuring that your voice and image remain yours alone.

Do you want to delve deeper into the subject? Do you have questions or comments, or would you like to share your opinion or experience on this topic? Write to me on Instagram: @davisalvesphd.

*This text does not necessarily reflect the opinion of Jovem Pan.